Using images and deep learning for the identification of high-risk insect species

Images of insects are routinely used for taxonomic identifications and are a fundamental diagnostic tool in any modern biosecurity system. But with increasing border incursions, a changing cohort of species due to expanded trade and climate change, and the growing taxonomic impediment, this critical part of the system is under pressure, which threatens the timely detection of exotic pests.

New technological approaches are needed to avoid consequences downstream of the border due to slow species identifications.

Automated image identification has the potential to alleviate these growing challenges of diagnostic throughput and taxon diversity. Deep learning methods already shape how AI is used across a wide range of scientific disciplines. Models such as convolutional neural networks have been shown to extract features from images and learn to differentiate insect species with an accuracy that is fast approaching that of human experts.

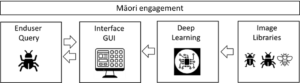

In this project, a cross-disciplinary team of biologists and technologists from Manaaki Whenua Landcare Research and the University of Auckland aims to apply this approach to the exotic pest insect identifications required for routine biosecurity surveillance and incursion responses.

The B3 team will adapt the existing deep learning toolbox into an end-user-friendly interface for creating and using new entomological models. The work will draw on open-source Python libraries, frameworks such as TensorFlow, and publicly available prototypes such as those shared on GitHub. An image library will be developed from specimens in taxonomic collections to train the software and test identification accuracy. Māori engagement is integral across the project and has been established to help understand the protocols and cultural considerations for storing and handling specimens of key New Zealand species for imaging.

The proof-of-principle focus is on the accuracy of identification for two high-priority insect groups: the Queensland fruit fly, which is difficult to distinguish from its sibling species, and the brown marmorated stink bug, which could be confused with other species already in New Zealand.
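One way to put a number on this kind of accuracy is per-species recall, together with a count of how often a target pest is mistaken for a look-alike. The sketch below is illustrative only: the labels and predictions are made-up placeholders rather than project data, and the function names `confusion_counts` and `recall_per_species` are hypothetical.

```python
from collections import Counter


def confusion_counts(true_labels, predicted_labels):
    """Count how often each (true, predicted) label pair occurs."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    return Counter(zip(true_labels, predicted_labels))


def recall_per_species(true_labels, predicted_labels):
    """Fraction of each species' specimens that were identified correctly."""
    counts = confusion_counts(true_labels, predicted_labels)
    totals = Counter(true_labels)
    return {
        species: counts[(species, species)] / total
        for species, total in totals.items()
    }


# Hypothetical labels for illustration only (not project results).
truth = ["target_pest"] * 4 + ["look_alike"] * 2
preds = ["target_pest", "target_pest", "look_alike", "target_pest",
         "look_alike", "target_pest"]

recall = recall_per_species(truth, preds)
# Target specimens wrongly labelled as the look-alike species.
missed_targets = confusion_counts(truth, preds)[("target_pest", "look_alike")]
```

In a biosecurity setting, a missed target pest is likely to matter more than a false alarm, so recall on the target species may be a more informative figure than overall accuracy.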

The project will transition from applying models trained on images from taxonomic collections to real-world imagery of insects in the field, including in traps. This will tackle a key question: how well images of real-world specimens match those in an image library, and what might be needed to ensure the approach remains accurate.
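A simple way to examine this question is to score the same model separately on collection images and on field images and compare the two. The following is a minimal sketch under stated assumptions: the records are invented placeholders, the source names `collection` and `field` are illustrative, and `accuracy_by_source` is a hypothetical helper, not part of the project's software.

```python
from collections import defaultdict


def accuracy_by_source(records):
    """Return {source: accuracy} for (source, true_label, predicted_label) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for source, true_label, predicted_label in records:
        total[source] += 1
        if true_label == predicted_label:
            correct[source] += 1
    return {source: correct[source] / total[source] for source in total}


# Hypothetical predictions for illustration only (not project results).
records = [
    ("collection", "stink_bug", "stink_bug"),
    ("collection", "other", "other"),
    ("collection", "stink_bug", "stink_bug"),
    ("collection", "other", "other"),
    ("field", "stink_bug", "stink_bug"),
    ("field", "stink_bug", "other"),
    ("field", "other", "other"),
    ("field", "other", "stink_bug"),
]

scores = accuracy_by_source(records)
# A large positive gap suggests the model transfers poorly to field imagery.
gap = scores["collection"] - scores["field"]
```

A gap of this kind would point to differences between the two image sources, such as lighting, specimen condition, or background, that the training library does not yet cover.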

Contact Project Leader Darren Ward: [email protected]